MCP, UCP, and ACP are acronyms that have gradually been incorporated into the technological discourse of the hospitality sector as the adoption of artificial intelligence advances. Below, we will take a closer look at them to understand what they represent, the role they play in this new landscape, and why they are beginning to gain prominence in conversations around distribution and technology—despite the fact that this remains an uncertain and still-evolving territory.

When discussing the emergence of AI-driven assistants in hotel distribution, the commercial narrative is often built around a recurring premise: the alleged need for every hotel to adapt its direct booking funnel to this new context. This is a statement with important nuances that deserves careful analysis, applying—as is almost always the case in technology—logic and industry knowledge, in order to avoid poorly calibrated assumptions that could end up becoming a misstep, with unnecessary costs in both financial resources and time.

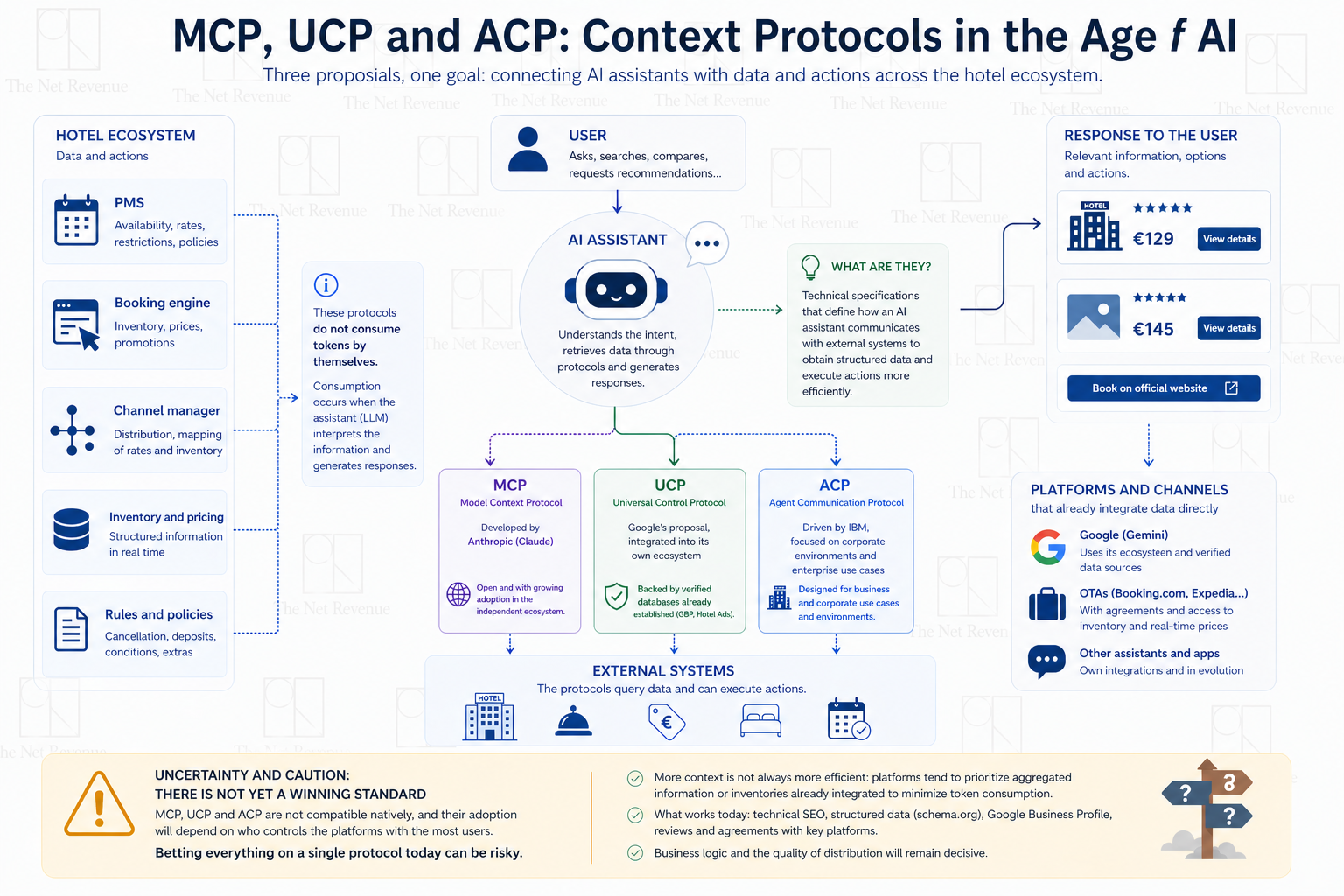

What are context protocols?

Context protocols are technical specifications designed to define how an artificial intelligence assistant communicates with external systems in order to retrieve structured data and execute actions more efficiently. Broadly speaking, they can be understood as a functional analogy to sitemap files in SEO: they do not generate content or intelligence themselves, but they make it easier for automated systems—in this case, AI assistants—to access and interpret relevant information in a structured way.

At present, the proposals attracting the most attention within the technology ecosystem are:

- MCP (Model Context Protocol), developed by Anthropic (Claude), open in nature and seeing growing adoption within the independent ecosystem.

- UCP (Universal Commerce Protocol), Google’s approach, integrated into its own ecosystem and supported by already well-established verified databases such as Google Business Profile or Hotel Ads.

- ACP (Agent Communication Protocol), promoted by IBM, with a stronger focus on corporate environments and enterprise use cases.
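
To make the idea of a context protocol more concrete, the sketch below builds the kind of message an assistant could send to an MCP server. MCP messages follow JSON-RPC 2.0, and `tools/call` is the method used to invoke a tool. The tool name `check_availability`, the `hotel_id` value, and the argument names are hypothetical and only illustrative; a real hotel-side server would define its own.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request asking an MCP server to run one tool."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool and arguments, used here for illustration only.
request = build_tool_call(
    request_id=1,
    tool_name="check_availability",
    arguments={
        "hotel_id": "HTL-001",
        "check_in": "2026-07-10",
        "nights": 2,
        "adults": 2,
    },
)

print(json.dumps(request, indent=2))
```

The message itself is plain structured data: nothing in it generates content or intelligence, which is why the sitemap comparison is a reasonable one.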

It is important to understand that none of these protocols consume tokens by themselves, although they do lead to token consumption indirectly when an AI model interacts with the information they expose. Tokens are consumed when the assistant incorporates this external data into its context in order to reason (counted as input tokens) and when it generates responses based on that data (output tokens). This cost increases as content becomes more extensive and detailed (full inventories, rate rules, policies, etc.) and multiplies in conversational flows with repeated calls. By contrast, neither the technical standard, nor the endpoint, nor the prior system-to-system exchange consumes tokens: a PMS can respond to an MCP request at no token cost until an LLM interprets that information.

This distinction is particularly relevant for the hotel sector because more context does not necessarily mean greater efficiency. Platforms that control access to AI have clear incentives to minimize token consumption and, in practice, tend to prioritize aggregated information or inventories already integrated at platform level over individual protocols for each hotel.
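
The token accounting described above can be illustrated with a rough back-of-the-envelope calculation. This is a minimal sketch under stated assumptions: the ratio of four characters per token is only a common rule of thumb for English text, the prices per million tokens are hypothetical, and the model assumes the same external payload is placed in the assistant's context on every turn of the conversation.

```python
import math

# Assumption: rough rule of thumb for English text; real tokenizers vary.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Very rough token estimate based on character count."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def conversation_cost(
    payload_tokens: int,
    reply_tokens: int,
    turns: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Estimated cost when the payload is read as input on every turn."""
    input_tokens = payload_tokens * turns
    output_tokens = reply_tokens * turns
    total = (
        input_tokens * input_price_per_million
        + output_tokens * output_price_per_million
    )
    return total / 1_000_000


# Hypothetical payload sizes: a short aggregated summary versus a full
# inventory with rate rules and policies.
summary_tokens = estimate_tokens("x" * 2_000)
inventory_tokens = estimate_tokens("x" * 80_000)

# Hypothetical prices (per million tokens) and conversation length.
summary_cost = conversation_cost(summary_tokens, 300, 5, 3.0, 15.0)
inventory_cost = conversation_cost(inventory_tokens, 300, 5, 3.0, 15.0)

print(f"summary payload:   {summary_tokens} tokens, cost {summary_cost:.4f}")
print(f"inventory payload: {inventory_tokens} tokens, cost {inventory_cost:.4f}")
```

Whatever the real prices turn out to be, the shape of the result is the point: the larger payload dominates the input side of the bill and the gap widens with every additional turn, which is consistent with platforms favoring compact, aggregated data.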

Anyone who has worked with professional uses of artificial intelligence, beyond basic tasks or flat-rate models, is well acquainted with the concept of tokens and their direct impact on costs and operational limits. In the context of large language models (LLMs), tokens are the smallest units of text that a model processes to understand and generate language, and they are one of the key factors shaping both technical architecture and the strategic decisions of the platforms that operate them.

Why should we be cautious when applying them in the current technology ecosystem?

The problem, and at the same time the greatest risk, of focusing strategy and budget on adopting a context protocol is that the three main proposals on the market pursue similar goals but are incompatible with one another. The standard that ultimately prevails will depend less on technical excellence than on who controls the platforms with the largest user bases. This is the classic pattern of any standards war: Betamax versus VHS, Amiga versus PC, cartridge versus CD-ROM. The best technology does not win; the most widely distributed one does. Betting everything on a single protocol, with the high investment that entails, is at the very least risky at a time when there is still no clear winner.

It is true that AI assistants drastically reduce exploration time—from days to hours—which represents a tangible improvement for users. However, they do not substantially change the logic of the decision‑making process or where bookings ultimately materialize. Moreover, the business model at the lower stages of the funnel still does not work—neither for OpenAI nor for Google. And that deficit is fundamentally a platform problem, not a hotelier’s problem.

Claiming that businesses implementing an MCP infrastructure today will automatically be better positioned when assistants dominate automated discovery rests on a tempting analogy with the early adopters of SEO, but a conceptually weak one. In SEO, the standard was already settled: Google was the undisputed referee. In today’s ecosystem of AI agent protocols, the standard has yet to be decided. This does not invalidate MCP as a future bet, but it does make it naïve to present it as a strategic certainty when, in reality, it remains one hypothesis among several competing options.

As of today, AI assistants cannot guarantee reliable real-time availability and pricing information, which creates uncertainty for users and pushes them back toward the traditional channels they already know. And the mechanism that is solving this limitation does not require hotels to implement anything themselves. Booking.com and Expedia have been integrating their inventories directly with ChatGPT and other assistants for months through agreements that individual hotels cannot replicate. When the consideration phase effectively shifts into the AI environment, it is most likely to be resolved by showing intermediary results with structured data, massive inventory, and real-time pricing, the same pattern seen with Google. There, the direct channel did gain visibility, but it competed at a disadvantage against intermediaries that were better prepared and had greater negotiating power.

It is worth remembering that semantic HTML and structured data from schema.org have been standards for years and today form the foundation on which LLMs build their responses. Active management of Google Business Profile feeds verified information directly into Google’s assistant. Presence on review platforms that assistants already consult systematically builds authority that is transferred into generated answers. These are decisions with proven impact today, not dependent on whether a specific technical standard eventually prevails.
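
As an illustration of the structured data mentioned above, the following sketch generates a schema.org `Hotel` description as JSON-LD, ready to embed in a page. The property names (`address`, `starRating`, `aggregateRating`, `checkinTime`, `checkoutTime`) come from the schema.org vocabulary; the hotel name, address, and ratings are invented sample values, and the helper function is hypothetical.

```python
import json


def hotel_json_ld(name, street, city, country, stars, rating, review_count):
    """Build a schema.org Hotel description as a JSON-LD dictionary."""
    return {
        "@context": "https://schema.org",
        "@type": "Hotel",
        "name": name,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressCountry": country,
        },
        "starRating": {"@type": "Rating", "ratingValue": stars},
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": review_count,
        },
        "checkinTime": "15:00",
        "checkoutTime": "11:00",
    }


# Invented sample values, for illustration only.
data = hotel_json_ld(
    name="Hotel Example",
    street="1 Sample Street",
    city="Valencia",
    country="ES",
    stars=4,
    rating=4.5,
    review_count=320,
)

# Wrap the JSON-LD in the script tag that pages use to embed it.
snippet = (
    '<script type="application/ld+json">\n'
    + json.dumps(data, indent=2)
    + "\n</script>"
)
print(snippet)
```

Markup of this kind is protocol-agnostic: it is read by search engines today and remains useful regardless of which agent protocol eventually prevails.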

That artificial intelligence will transform hotel distribution is not up for debate. But it is worth looking at real data. The SparkToro and Datos (Semrush) study published in 2026 debunks, at least for desktop and laptop usage, one of the travel sector's most repeated recent mantras: the supposed rise of AI-driven searches. We have discussed that study in a previous article, which can be consulted at the following link.

In the age of artificial intelligence, the winners may not be those who are most visible or who deploy the most technical layers but, as has historically happened in hotel distribution, those who best understand the logic of the system and manage their resources most wisely: those with inventory, reputation, and solid agreements with the platforms users already rely on. Preparing the technical infrastructure is a legitimate bet. Presenting it as the only possible strategy or as a guarantee of future competitiveness, however, is an oversimplification that prudent hoteliers should evaluate with all the nuances on the table.